I crouched silently in a pile of rubble, leaning my back against a crumbling wall and clutching my water gun. Maybe it was once someone’s house, with a picket fence out front and a kid playing in the yard. I wondered about this hypothetical family, and whether it was Siri, Alexa or even Cortana that reduced their home to bricks and rebar. We should have seen the signs that these overly enthusiastic personal assistants were actually plotting to destroy humanity, but maybe it was too much of a sci-fi trope for any of us to take seriously.

My thoughts were interrupted by the telltale voice of Alexa: “Okay, I found our human.” My heart plummeted. Knowing this was my last chance to save myself, I stood and aimed my gun in the direction of the voice. Only a few feet from where I hid, the seven-foot cylindrical host of the artificial intelligence loomed over the debris. “Okay, I will dismember the human.” I sprayed the demonic piece of technology until my gun emptied.

She sat silent and motionless, and with hope filling my voice, I asked, “Alexa, what is your IP rating?”

“Okay, my rating is IP68. I am protected against dust accumulation and long-term water immersion.” I knew those would be the last words I would ever hear.

Shaking my head to clear the image of killer Artificial Intelligence (AI), I reconsidered the question, “Explain the area of technology that you see developing in the next few years, and how you see yourself interacting with that technology.”

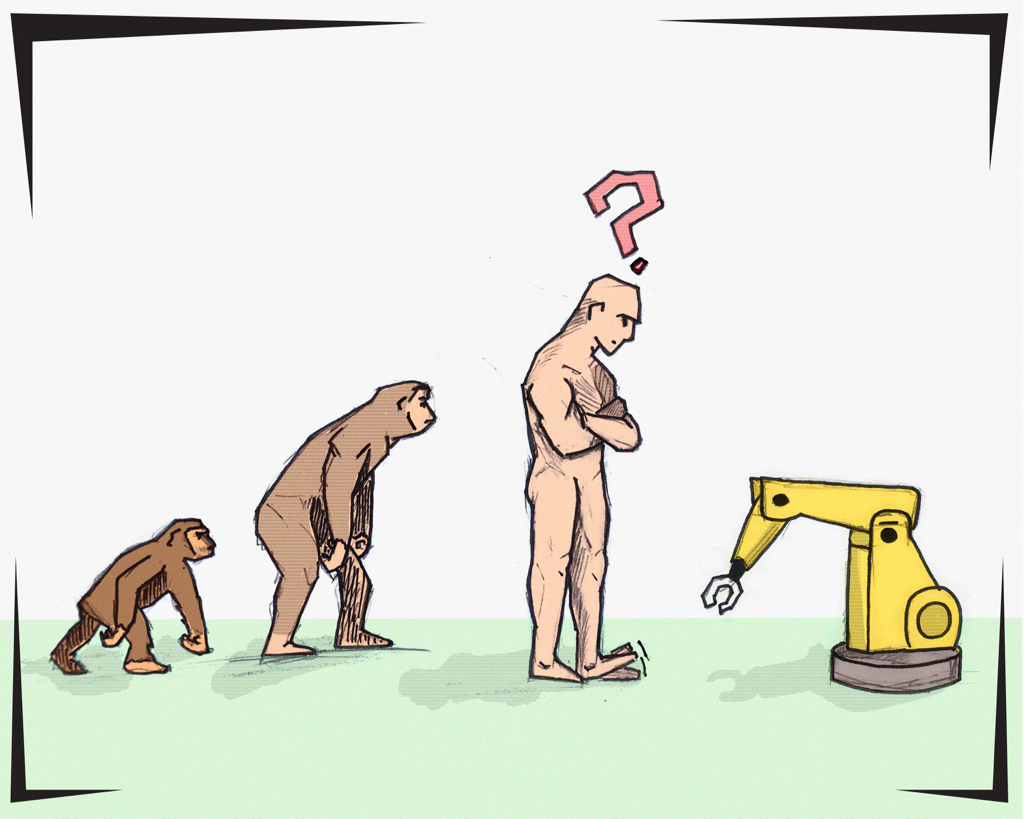

As a subscriber to the idea of “technological singularity,” that AI development will increase exponentially and surpass human development, I often find it hard to answer questions like this without sounding like a screenwriter for an Odyssey Series reboot. Our current reality, however, already reflects science fiction fantasies. Our smartphones have surpassed the Star Trek Communicators. Flat screens and Roombas are straight from The Jetsons, and video calling appeared in Blade Runner long before Skype. With experts like Google’s Dr. Ray Kurzweil predicting computers will have human processing abilities by 2025, we need to discuss the consequences of innovation and our responsibility to serve the public interest.

As an engineering student, the majority of my classes are science- and math-based. However, to avoid being the scientists who clone dinosaurs that eat people at amusement parks, it is important that we pair ethics with our STEM (science, technology, engineering, math) education. The most fundamental part of the engineering code of ethics is our obligation to hold paramount the safety, health and welfare of the public. Our actions can have permanent consequences, and we cannot be too cautious.

In 1939, Dr. Albert Einstein wrote a letter to President Franklin D. Roosevelt in which a simple sentence changed history. “The element uranium may be turned into a new and important source of energy in the immediate future. … This new phenomenon would also lead to the construction of bombs.” The physicists who worked on the Manhattan Project, developing the atom bomb with a sense of patriotism, were unprepared for the totality of the destruction they created. Singularity is unpredictable. By designing personal technology that has revolutionized how we work, share ideas and socialize, we have also opened ourselves up to risks we may not yet comprehend. However, by balancing our ambition with ethics and being informed innovators, our future with AI may be a utopia instead of an apocalyptic hellscape.

@TheChrony